Varnish is a great tool to have if you need a scriptable proxy server. For most sites, though, this will be more complex than necessary. Check out this article on how to speed up WordPress with Varnish.

I propose that most users don’t need the power of VCL. They’d be much better off with a high-performance proxy server that offers RFC 2616 compliance and simple configuration.

Allow me to play devil’s advocate and show you how to build and configure Apache Traffic Server, then show you benchmark results:

    # Built on CentOS 6.2

    # Get Traffic Server prerequisites
    yum install gcc-c++ openssl-devel tcl-devel expat-devel pcre-devel make

    # Get Traffic Server
    wget http://apache.tradebit.com/pub/trafficserver/trafficserver-3.1.3-unstable.tar.bz2
    tar -xf trafficserver-3.1.3-unstable.tar.bz2
    cd trafficserver-3.1.3-unstable

    # Build Traffic Server
    ./configure
    make -j 8

    # Install Traffic Server
    make install
    ln -s /usr/local/bin/trafficserver /etc/init.d/trafficserver
    chkconfig --add trafficserver
    chkconfig trafficserver on

    # Configure Traffic Server URL map
    echo "map http://YOURDOMAIN.COM http://localhost" >> /usr/local/etc/trafficserver/remap.config
    echo "reverse_map http://localhost http://YOURDOMAIN.COM" >> /usr/local/etc/trafficserver/remap.config

    # Run Traffic Server
    trafficserver restart
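
The two remap rules above follow a fixed pattern, so they are easy to generate. Here is a minimal sketch in Python, assuming one public domain and one origin; the function name `remap_rules` and its parameters are made up for illustration and are not part of Traffic Server:

```python
from typing import List


def remap_rules(domain: str, origin: str = "http://localhost") -> List[str]:
    """Build the map/reverse_map pair for one public domain.

    Hypothetical helper: it only formats the two lines that the echo
    commands above append to remap.config.
    """
    public = "http://" + domain
    return [
        "map {} {}".format(public, origin),
        "reverse_map {} {}".format(origin, public),
    ]


if __name__ == "__main__":
    # Print the rules so they can be reviewed before going into remap.config.
    print("\n".join(remap_rules("YOURDOMAIN.COM")))
```

Printing the lines instead of writing the file directly leaves room to review them first.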

Note: Traffic Server runs on port 8080. I used my host’s router to map port 80 -> 8080 and left httpd on port 80.

Since Traffic Server is now handling all incoming requests, the web server logs will be unreliable (httpd only sees the requests that miss the cache). You can rely on an in-browser analytics package, or see how to configure Traffic Server for combined logs.

Configuration done

Let’s benchmark.

Note: my test site is using W3 Total Cache. W3TC sets the cache-control, last-modified, and expires headers on the pages and static content so that Traffic Server knows how best to cache your content. You can also use the Varnish integration in W3TC with Traffic Server. This will purge pages from the Traffic Server proxy whenever you purge them from W3TC.

Protip: If you need Traffic Server to cache content that has no expires header or cache-control header, look in records.config, find this section, and lower the value to 1 or 0:

    # required headers: three options:
    #   0 - No required headers to make document cachable
    #   1 - "Last-Modified:", "Expires:", or "Cache-Control: max-age" required
    #   2 - explicit lifetime required, "Expires:" or "Cache-Control: max-age"
    CONFIG proxy.config.http.cache.required_headers INT 2
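
To make the three levels concrete, here is a rough Python sketch that mirrors only what the comment in records.config says. It is not Traffic Server's actual logic, which considers much more than this one check, and the name `meets_required_headers` is made up for illustration:

```python
def meets_required_headers(headers: dict, level: int) -> bool:
    """Return True if a response carries the headers that `level` asks for.

    Models only the three options listed in the records.config comment.
    """
    # HTTP header names are case-insensitive, so normalize them first.
    lowered = {name.lower(): value for name, value in headers.items()}
    has_max_age = "max-age" in lowered.get("cache-control", "").lower()
    has_expires = "expires" in lowered
    has_last_modified = "last-modified" in lowered

    if level == 0:
        return True  # no headers required
    if level == 1:
        return has_last_modified or has_expires or has_max_age
    if level == 2:
        return has_expires or has_max_age  # explicit lifetime only
    raise ValueError("level must be 0, 1, or 2")


if __name__ == "__main__":
    response = {"Last-Modified": "Mon, 02 Jan 2012 00:00:00 GMT"}
    for level in (0, 1, 2):
        print(level, meets_required_headers(response, level))
```

A response that only carries Last-Modified passes at levels 0 and 1 but not at the default of 2, which is why lowering the setting matters for sites that send no explicit lifetime.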

Look at blitz.io. I used this command in the blitzbar:

    # If you paid for blitz, you can max out at 1,000 concurrent users
    # -p 1-1000:5,1000-1000:55 -r california -H 'Accept-encoding: gzip' http://YOURDOMAIN.COM/
    -p 1-250:1,250-250:59 -r california -H 'Accept-encoding: gzip' http://YOURDOMAIN.COM/
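
If the `-p` pattern looks cryptic, here is a small Python sketch that breaks it into steps. It assumes each comma-separated step reads `start-end:seconds` (ramp from `start` to `end` concurrent users over that many seconds); the function name `parse_pattern` is made up for illustration:

```python
from typing import List, Tuple


def parse_pattern(pattern: str) -> List[Tuple[int, int, int]]:
    """Split a pattern like '1-250:1,250-250:59' into (start, end, seconds)."""
    steps = []
    for part in pattern.split(","):
        users, seconds = part.split(":")
        start, end = users.split("-")
        steps.append((int(start), int(end), int(seconds)))
    return steps


if __name__ == "__main__":
    for pattern in ("1-250:1,250-250:59", "1-1000:5,1000-1000:55"):
        steps = parse_pattern(pattern)
        total = sum(seconds for _, _, seconds in steps)
        print(pattern, "->", steps, "total seconds:", total)
```

Read this way, both patterns ramp up quickly and then hold the peak load for the rest of a 60-second run.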

For these benchmarks, I paid to upgrade blitz.io to allow 1,000 concurrent users. At 250 concurrent users, the performance profiles of these systems didn’t really shine through.

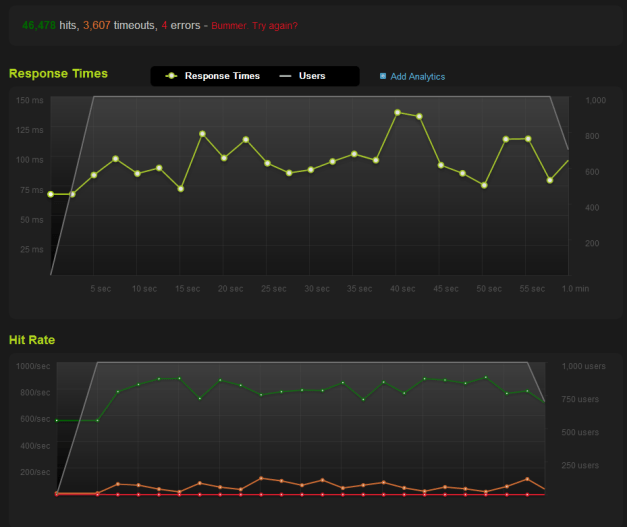

Here’s the Varnish benchmark.

Here’s the Traffic Server benchmark:

For comparison, here’s Apache HTTPD alone:

Notice how Varnish doesn’t like the initial influx of connections, and how it had more timeouts. Notice, too, how much of a difference these caching systems make. Remember, the Apache HTTPD benchmark is already using WordPress + W3 Total Cache.

Let’s look at apachebench for a different view. Using this command, I benchmarked raw Apache HTTPD vs. Varnish vs. Traffic Server. This is all done locally over a loopback connection.

    # Change the port to 80 to benchmark apache directly
    ab -c 250 -n 1000000 -k -H "Host: YOURDOMAIN.COM" http://127.0.0.1:8080/

Apache results (no chance!):

    apr_socket_recv: Connection timed out (110)
    Total of 17725 requests completed

Varnish results:

    Time taken for tests:   95.049 seconds
    Complete requests:      1000000
    Failed requests:        0
    Requests per second:    10520.83 [#/sec] (mean)
    Time per request:       23.762 [ms] (mean)
    Time per request:       0.095 [ms] (mean, across all concurrent requests)
    Transfer rate:          75257.95 [Kbytes/sec] received

Traffic Server results:

    Time taken for tests:   50.971 seconds
    Complete requests:      1000000
    Failed requests:        0
    Requests per second:    19619.00 [#/sec] (mean)
    Time per request:       12.743 [ms] (mean)
    Time per request:       0.051 [ms] (mean, across all concurrent requests)
    Transfer rate:          139371.67 [Kbytes/sec] received
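
As a quick sanity check on the two ab runs above, this short Python sketch recomputes the mean time per request from the reported requests per second and the concurrency of 250, then works out the throughput ratio. The figures are copied from the output above; the variable and function names are made up for illustration:

```python
CONCURRENCY = 250  # the -c value passed to ab

# Requests per second as reported by ab above.
REQUESTS_PER_SECOND = {
    "Varnish": 10520.83,
    "Traffic Server": 19619.00,
}


def time_per_request_ms(rps: float, concurrency: int) -> float:
    """Mean time per request in ms: concurrency / throughput * 1000."""
    return concurrency / rps * 1000


def speedup(fast: float, slow: float) -> float:
    """How many times more requests per second `fast` handles than `slow`."""
    return fast / slow


if __name__ == "__main__":
    for name, rps in REQUESTS_PER_SECOND.items():
        print(name, round(time_per_request_ms(rps, CONCURRENCY), 3), "ms")
    ratio = speedup(REQUESTS_PER_SECOND["Traffic Server"],
                    REQUESTS_PER_SECOND["Varnish"])
    print("Traffic Server / Varnish:", round(ratio, 2))
```

The recomputed times per request agree with the ab output, and the ratio comes out a little under 2.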

Conclusion

According to blitz.io, the Varnish and Traffic Server benchmark results are close. According to ab, Traffic Server is nearly twice as fast as Varnish (about 19,600 vs. 10,500 requests per second). The two packages are configured differently. Traffic Server has some unique features: it can act as both a forward proxy and a reverse proxy, it can proxy SSL connections, it has a plugin architecture, and it will soon have SPDY support. If you haven’t heard of Traffic Server yet, you should check it out. If you’re not using a caching proxy, please start using one!

For more information on Apache Traffic Server, check out this 2011 USENIX presentation (PDF, 10.4 MB) by Leif Hedstrom

Putting all lines on one chart would make them more readable :P

I want to know how to do this on blitz.io, too.

Thanks man,

It’s a valuable article

Just in case someone wants an RPM, the Fedora teams are building RPMs for Fedora, RHEL and CentOS (use EPEL).

Very nice article, probably the easiest ATS install I’ve seen!

I have a concern, though, and haven’t seen it addressed elsewhere:

Your config works great for one domain on the server/vps but how would you manage lots of domains without having to do it manually?

I would be interested in such a remap.config tweak…

You can use the regex_remap plugin to do this. Check out the documentation on the site here. If you’re stumped, I recommend jumping in to the IRC chat room (freenode #traffic-server) and asking the guys there, they’re very helpful.

OK…THX

Here’s a one liner that handles multiple domains sent to one backend server (like a virtual hosting apache box):

    regex_map http://([a-z\-0-9\.]+) http://my.other.box.com:8080/

We have this line last so we can pick off some things first before falling through to the catch-all regex rule.
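
To see which hostnames a catch-all rule like this picks up, here is a rough check of the character class, read as `[a-z\-0-9\.]+`, using Python's `re` module. This only shows what the pattern accepts in Python; how Traffic Server normalizes hostnames before matching is not covered, and the name `host_matches` is made up for illustration:

```python
import re

# The host part of the regex_map rule, read as [a-z\-0-9\.]+
HOST_PATTERN = re.compile(r"[a-z\-0-9\.]+")


def host_matches(host: str) -> bool:
    """True if the whole hostname consists of a-z, 0-9, '-' and '.'."""
    return HOST_PATTERN.fullmatch(host) is not None


if __name__ == "__main__":
    for host in ("example.com", "www.my-site.org", "Example.com", "bad_host.com"):
        print(host, host_matches(host))
```

Lowercase names with digits, dots, and hyphens match. In Python, uppercase letters and underscores do not.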

Hi, I have a question about your tutorial.

I set ATS to listen on port 80 and Apache on port 10080.

When I put this:

map http://domain.com http://domain.com:10080

reverse_map http://domain.com:10080 http://domain.com

it works.

I would like to use ATS to handle multiple domains without an individual config per domain, but when I set

regex_map http://([a-z\-0-9\.]+) http://my_server_ip:10080

it does not work.

How can I set it up?

I haven’t worked with multiple domains and regex_map personally. The best place to find support for ATS is in the IRC room on irc.freenode.net in #traffic-server

Check your Apache (httpd) logs. I suspect that you will see a virtual hosting error because your site is expecting http://www.site.com and getting http://www.site.com:10080 after the rewrite, which has no match in Apache. If nothing is making it through, then concentrate on ATS.

Hello, thank you very much for the useful article.

At least I found new things for me)

I successfully installed it on Debian Squeeze, and it worked like a charm for virtual hosts without any regex_ stuff. (This is not a production server, just for fun.)

Before installation, just make sure you have a BIND DNS server:

    root@debian:/usr/local/etc/trafficserver# uname -a
    Linux debian 2.6.32-5-686 #1 SMP Sun Sep 23 09:49:36 UTC 2012 i686 GNU/Linux
    root@debian:/usr/local/etc/trafficserver# grep -r '192.168.0.15' *
    records.config:CONFIG proxy.config.dns.nameservers STRING 127.0.0.1 192.168.0.15

    root@debian:/usr/local/etc/trafficserver# tail -n 20 remap.config
    #
    # Regex support: Regular expressions can be specified in the rules with the
    # following limitations:
    #
    # 1) Only the host field can have regexes - the scheme, port and other
    #    fields cannot.
    # 2) The number of capturing sub-patterns is limited to 9;
    #    this means $0 through $9 can be used as substitution place holders ($0
    #    will be the entire input string)
    # 3) The number of substitutions in the expansion string is limited to 10.
    #
    map http://hacker1.own http://hacker1.own:10080
    reverse_map http://hacker1.own:10080 http://hacker1.own
    map http://my.hack http://my.hack:10080
    reverse_map http://my.hack:10080 http://my.hack

    root@debian:/usr/local/etc/trafficserver# ls -lias /etc/apache2/sites-enabled/
    total 8
    34912 4 drwxr-xr-x 2 root root 4096 Jan 12 14:16 .
    34148 4 drwxr-xr-x 9 root root 4096 Dec 31 02:19 ..
    35374 0 lrwxrwxrwx 1 root root 30 Dec 23 08:51 hacker1.own -> ../sites-available/hacker1.own
    35443 0 lrwxrwxrwx 1 root root 36 Jan 12 14:16 my.hack -> /etc/apache2/sites-available/my.hack

    root@debian:/usr/local/etc/trafficserver# less /etc/bind/named.conf.default-zones
    // prime the server with knowledge of the root servers
    zone "." {
        type hint;
        file "/etc/bind/db.root";
    };

    // be authoritative for the localhost forward and reverse zones, and for
    // broadcast zones as per RFC 1912
    zone "localhost" {
        type master;
        file "/etc/bind/db.local";
    };

    zone "192.168.0.15" {
        type master;
        file "/etc/bind/db.local";
    };

    zone "hacker1.own" {
        type master;
        file "/etc/bind/db.local";
    };

    zone "my.hack" {
        type master;
        file "/etc/bind/db.local";
    };

    root@debian:/tmp# cat -n /etc/apache2/htdocs/hack/me.php
    1
    root@debian:/tmp# curl my.hack/me.php
    Currently We Served : my.hack
    root@debian:/tmp# curl http://hacker1.own/me.php
    Currently We Served : hacker1.own

Everything is OK for me.

Once again, huge thanks to the author of this article!

Cheers.